Think about a day at work when your team is full of energy and ideas. They solve problems quickly and work well together. And you? You’re helping them succeed and focusing on the big picture. Your job isn’t just about managing; it’s about leading in a new way.

So, how do we make this happen? How do we unite a group to form a strong team that does better than we ever hoped? The answer lies in encouraging initiative.

In this blog post, I’ll discuss the 7 Levels of Initiative, a model from Stephen R. Covey’s work. It’s not just a way to gauge how well a manager is running the team; it’s a tool for helping our teams be their best.

The Theory of Initiative

Understanding Initiative in Engineering Teams

Initiative is about taking action before being asked. It’s seeing a problem or opportunity and addressing it right away. In engineering, having initiative means staying ahead of the game: thinking ahead, solving problems quickly, and constantly looking for ways to improve. It shows that a team member is engaged, responsible, and ready to contribute creatively. In short, initiative is key for a team to innovate, adapt, and excel.

Everyday Initiative Beyond Big Changes

A common belief I encounter is that initiative is limited to architecture changes or big team-process changes, and that once those are done, there is no room left for more initiative. However, I believe initiative starts and manifests in everyday situations, even within a team’s established ways of working. Initiative isn’t just about making large-scale changes or redesigning project architectures. It’s often about the smaller, impactful actions that enhance team performance and project outcomes.

For instance, instead of waiting for next week’s scheduled retrospective, a team member might proactively arrange an immediate meeting to tackle a recurring issue, showing a keen sense of urgency and problem-solving. Another example is a team member leading a small training session to share knowledge about a new tool.

Spotting Low Initiative

In a large-team (or multi-team) setting, it’s vital to identify signs of low initiative among team members. Phrases like “That’s not my role,” “No one told me I should do this,” or “I have never done this before” are typical indicators. These statements suggest a reluctance to step out of a defined role or comfort zone, and a habit of waiting for explicit directions rather than seeking opportunities to contribute.

This mindset can slow the team’s progress, especially in larger groups where individual initiative drives efficiency and innovation. Recognizing and addressing these signs through coaching or creating opportunities for members to take on new challenges is essential for nurturing a proactive and dynamic team environment.

7 levels of initiative

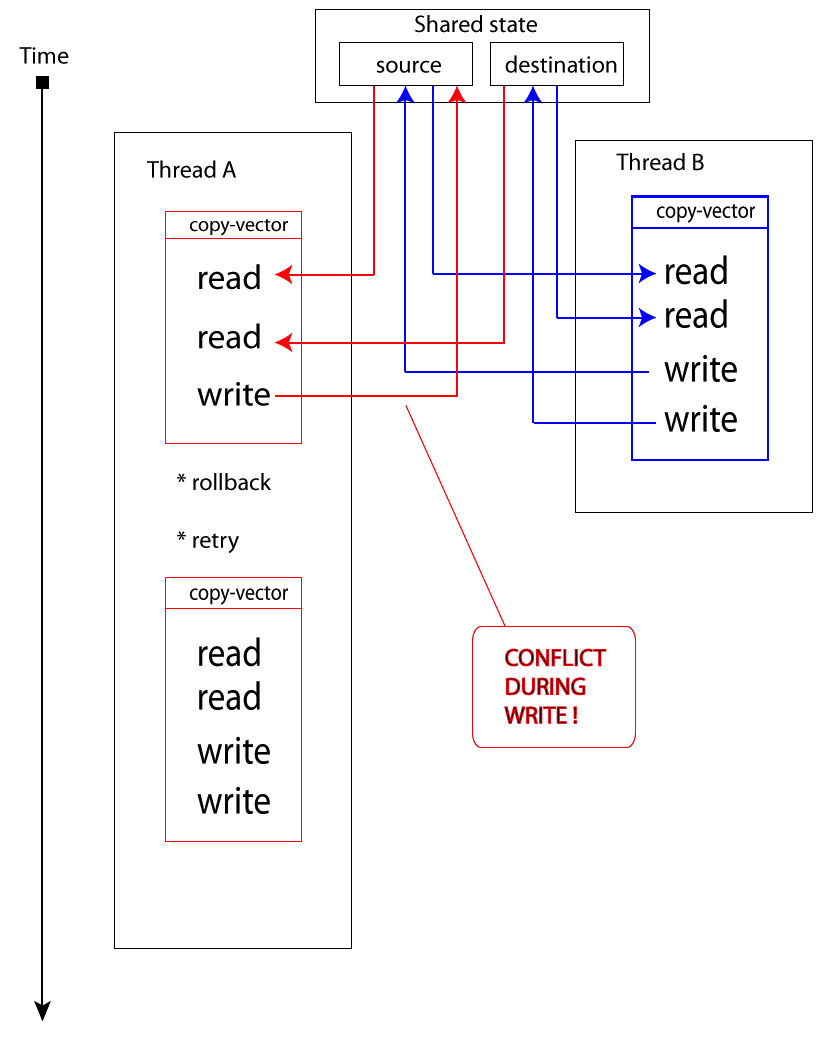

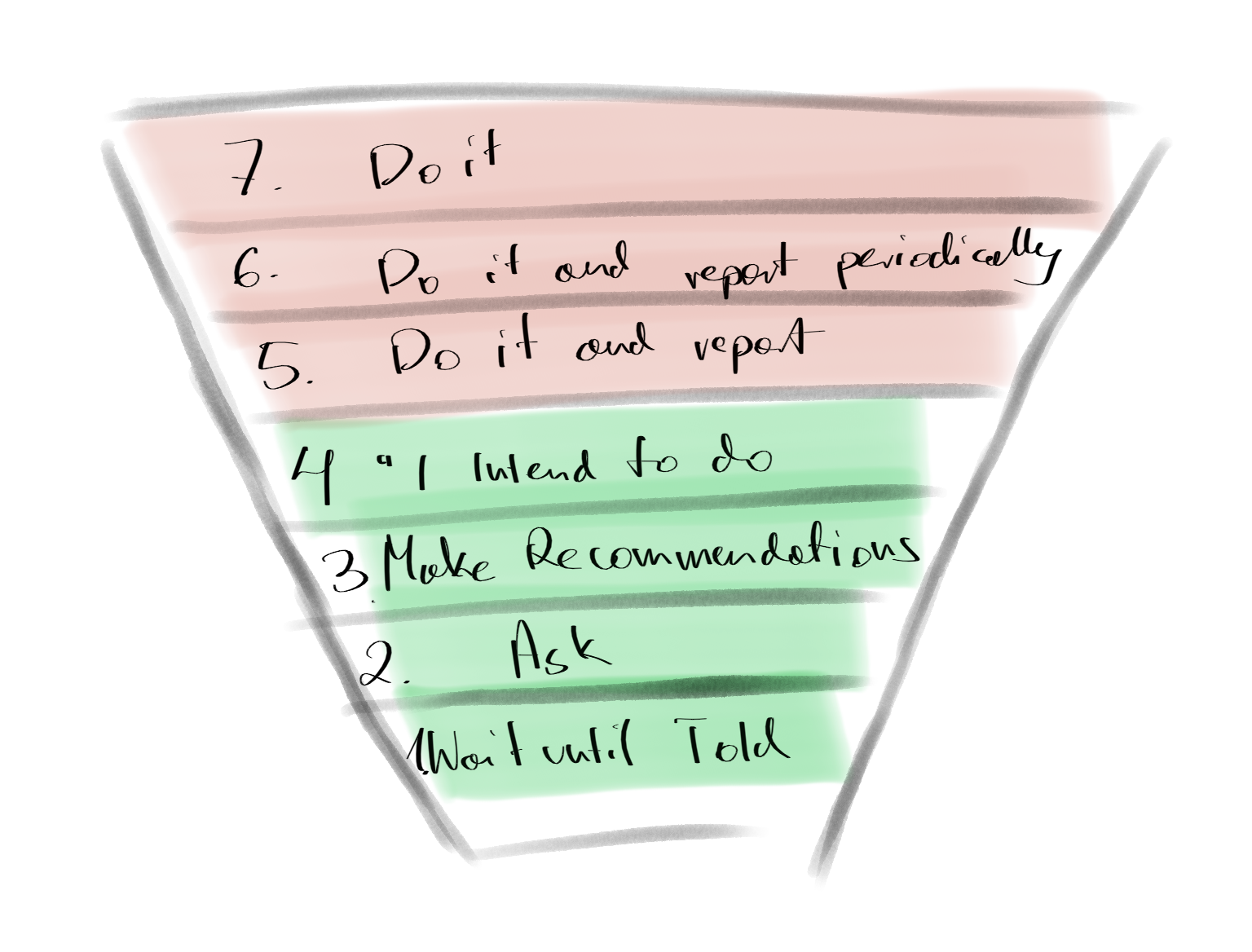

Stephen R. Covey’s classification of initiative comprises 7 distinct levels, each representing a different degree of decision-making autonomy. It’s crucial to recognize the shift in decision-making power between levels 1-4 and 5-7, with the latter starting with a ‘do it’ approach.

- In Levels 1-4, there’s constant communication, with the shift being in who initiates action: you or your direct report.

- Levels 5-7 represent a transition to less control, requiring significant trust in your reports. These levels lack a ‘final check’ by the manager, tech lead, or team, so employees need real courage to act and to handle the potential risks.

1. Wait Until Told

Here, team members are entirely reactive, depending on direct instructions. Typical of new hires or highly structured environments, this level shows no proactivity. Such individuals don’t look for what to do next or show curiosity; they simply wait for external input.

2. Ask

At this level, team members start to show interest by seeking tasks, indicating a move toward greater engagement. Questions like “What can I do next?” or “Why are we working this way?” arise, directed at you, the team, or themselves.

3. Make recommendations

A significant shift occurs here. Engineers not only ask questions but also provide answers, leading to idea generation. The key is their ability to propose concrete changes to the status quo, offering multiple potential solutions to a problem.

4. “I intend to do”

Building on the previous level, an engineer with multiple solutions to a problem can choose one, devise an action plan, and inform others of their intent. The plan may vary in scope, from a few days of changes to significant team-process adjustments, large refactorings of the codebase, or broader architecture changes.

5. Do it and report immediately

Autonomy increases as team members act independently and report back immediately. This level reflects trust and a solid grasp of responsibilities. It’s evident when someone updates you with results and conclusions from their independent work.

6. Do it and report periodically

Team members at this level take complete ownership of tasks, making decisions independently. They align closely with the team’s vision, requiring minimal oversight. Periodic updates are agreed upon to monitor progress.

7. Do it

This is the pinnacle of initiative: engineers act independently and communicate only the completion of their tasks or changes, skipping progress updates to managers or tech leads.

Refining the 7 Levels of Initiative

The Role of Initiative Levels in Delegation

Understanding the levels of initiative is crucial to delegating tasks effectively. When I assign a task, I also state the expected initiative level, which helps set clear expectations. Early in my role as an engineering manager, I learned this the hard way. My delegation approach was vague, leading to varied outcomes depending on the initiative levels of my team members. For instance, asking someone to work on “feature X” without specifying the expected level of initiative could result in a barrage of questions (level 2), a list of proposed solutions (level 3), or even a completed feature (level 7).

This experience taught me the importance of clear communication and setting precise expectations about the desired level of initiative for each task.

Characteristics at Higher Levels of Initiative

When dealing with higher levels of initiative, several factors become increasingly important:

- Trust between the manager and the engineer needs to be higher.

- The engineer’s competence and skill level should be higher (not only technical skills but also leadership skills, since a delegated task is rarely one you complete alone).

- There should be a noticeable willingness and eagerness to engage with the task. In particular, the willingness to take calculated risks becomes more important.

- Creativity in approaching and solving problems is essential.

Embracing Ownership and Accountability

At higher levels, accountability plays a significant role. Often, people may shy away from accountability, possibly due to fear of failure or lack of confidence. However, true ownership of a task requires being accountable and making decisions. Being accountable means not just delivering what is agreed upon but also proactively seeking ways to enhance the product and improve processes. A proactive approach and higher levels of initiative are fundamental to this ownership.

Navigating Between Levels in 1-on-1s

In my 1-on-1 meetings, I aim to challenge my team members to reach higher levels of initiative, tailored, of course, to each individual’s capabilities. However, it’s important to recognize when someone is being pushed to a higher level too quickly. For instance, if we agree on level 6 but the engineer struggles and makes mistakes, it’s crucial to be able to step back to a lower level of initiative. This adjustment should be clearly communicated, specifying how long it will last and how it will work. Such flexibility and guidance are key to nurturing and developing each team member’s potential.

Three Strategies to Increase Initiative

To boost initiative within a team, focus on these three strategies:

- Setting clear expectations

- Empowering decision-making

- Rewarding initiative

Clear Expectations

It’s essential to communicate that initiative and proactive behavior are important. Clearly define these behaviors and how they align with the team’s goals and the overall project vision. Misunderstandings often arise when managers expect initiative but don’t clarify what constitutes ‘good performance’ or ‘desirable behavior.’ In my experience, discussing the ‘7 Levels of Initiative’ with team members clarifies these expectations, and I do this with all my teams. Engineers need to understand that their performance is evaluated not only on task completion, like delivering features or fixing bugs, but also on their attitude and behavior.

Empowering Decision-Making

Enhancing initiative in your team means empowering people at various levels. Start by assigning meaningful tasks that challenge and develop their skills, signaling trust and encouraging ownership. Foster an environment that values autonomy, allowing team members to make decisions and bring their ideas to fruition. Open communication channels encourage sharing ideas and feedback. Embracing calculated risk-taking, with failures seen as opportunities for learning, is crucial. I’ve shared this model with my team and discussed it with them, so we now have a common vocabulary that makes everyday topics easier to discuss.

Understanding individual motivators and ensuring alignment with team goals is important. Recognize that not everyone will jump from lower to higher initiative levels instantly; gradual progression is key, along with providing room for mistakes and learning.

Recognizing and Rewarding Initiative

Acknowledging and rewarding initiative is often more effective than providing constructive feedback alone.

Consider a personal story. It was a warm and sunny day, and my 4-year-old son was eager to wash our new car because he was so excited about it. We started with preparations. I asked him to prepare water with car shampoo, since he enjoys playing with water (aligning the task with his motivators). Unfortunately, he used the entire bottle of shampoo instead of a few drops. Penalizing him could have discouraged him from helping in the future; guidance is more effective. Truth be told, a bottle of car shampoo is not expensive. He was so passionate about cleaning the car that he deserved a strong reward for all his work.

Similarly, positive feedback and rewards foster a culture of initiative in a professional setting. Rewards can be financial, like bonuses, but often more impactful are:

- Personal acknowledgment in 1-on-1s

- Faster promotion to a higher level

- Public praise

- Granting more autonomy

- Assigning more significant, impactful projects

- Providing opportunities to tackle more interesting and challenging tasks

Manager perspective

Initiative in Performance Reviews

In the context of performance reviews, understanding initiative goes beyond just completing a task well. As a manager, I place significant emphasis not only on the outcome of the task but also on the level of engagement and involvement demonstrated by the team members in accomplishing it.

Initiative is reflected in how an individual approaches a task: their ability to think proactively, anticipate potential challenges, and engage with the task creatively and enthusiastically. It’s about showing eagerness to take on responsibilities, to contribute ideas, and to go the extra mile. When reviewing performance, I look for signs of this deeper engagement: Has the team member shown a willingness to learn and adapt? Have they taken steps to improve processes or collaborate effectively with others? Importantly, this assessment relates to the task, not the engineer. Each engineer works on many tasks, and the initiative level is tied to each task rather than to the person.

In essence, initiative in performance is about the attitude and approach towards work, not just the technical execution of tasks. It’s this comprehensive view of performance that truly reflects a team member’s contribution and growth potential.

Addressing Lack of Initiative in Behavioral Interviews

When evaluating candidates, I’ve encountered on at least two occasions engineers who excelled at coding and system design but showed a noticeable lack of initiative, so I had to reject them.

This presents a unique challenge, especially in roles where proactive engagement and the ability to drive projects independently are crucial (especially senior roles). During the behavioral interview, it becomes apparent that while they possess strong technical skills, their approach to problem-solving and project management might be more reactive than proactive. This gap is significant because, in dynamic work environments, the ability to anticipate challenges, propose solutions, and take charge of situations is as valuable as technical proficiency. Addressing this during the interview involves probing deeper into their past experiences and scenarios where they might have taken the initiative. It’s also about evaluating their potential to develop this skill, considering the team’s current dynamics and the support structure available to nurture such growth.

Summary

In this post, we explored what makes a great engineering manager: a team that works well on its own. We discussed the 7 Levels of Initiative from Stephen R. Covey’s work, showing how important it is for team members to take action on their own, from big project changes to small daily tasks.

We learned that it’s key to be clear when you give tasks to your team, so they know what you expect from them in terms of taking charge. Higher levels of initiative need trust, skill, willingness, and creativity. It’s also about being okay with taking risks and being responsible for your work.

When looking at how well team members are doing, it’s not just about finishing tasks. It’s also about how they approach their work and if they’re willing to try new things. For bigger teams, it’s important for each person to manage their own work because the manager can’t guide everyone all the time.

Lastly, we saw that in job interviews, it’s important to find people who can think ahead and solve problems on their own, not just those who are good at coding.

In short, for a team to be successful, everyone needs to be able to think for themselves and be willing to take on new challenges. This helps the team work better and makes the job of an engineering manager rewarding.
